Product

One real place, packaged as a robot evaluation world model.

Blueprint starts with a real capture of a facility or public-facing place, then turns it into a site-specific world model with package files, hosted evaluation, and proof boundaries attached.

Preview

A cold visitor can see the product shape quickly.

Start with a real site. Then choose whether your team needs package files, a managed hosted evaluation, or a narrower capture request.

Sample review packet

A sample shows the path without pretending to be customer proof.

The grocery route is a sample. It shows the motion: a capturer records an everyday place, Blueprint reviews privacy and restrictions, and a robot team gets evidence it can evaluate.

Capture

Record a public-facing route from common areas and submit it for review.

Review

Privacy, rights, restrictions, and usefulness are checked before buyer framing.

Buyer room

Robot teams compare run evidence, limits, and export scope before committing.

Cedar Market Aisle Loop

Grocery store public aisles / $40 review cue, 25-minute walkthrough

Harbor Mall Common Corridor

Shopping mall common areas / $45 review cue, 30-minute walkthrough

Northline Hotel Lobby Loop

Hotel lobby and public common areas / $80 review cue, 40-minute walkthrough

Atlas Retail Service Aisle

Retail store public aisles / $40 review cue, 25-minute walkthrough

Session

What happens in a hosted evaluation.

The setup stays narrow so the result can answer a robot-team question instead of becoming a generic demo.

Scope

Name one listing or facility, one workflow, and the robot setup or policy question that matters.

Run

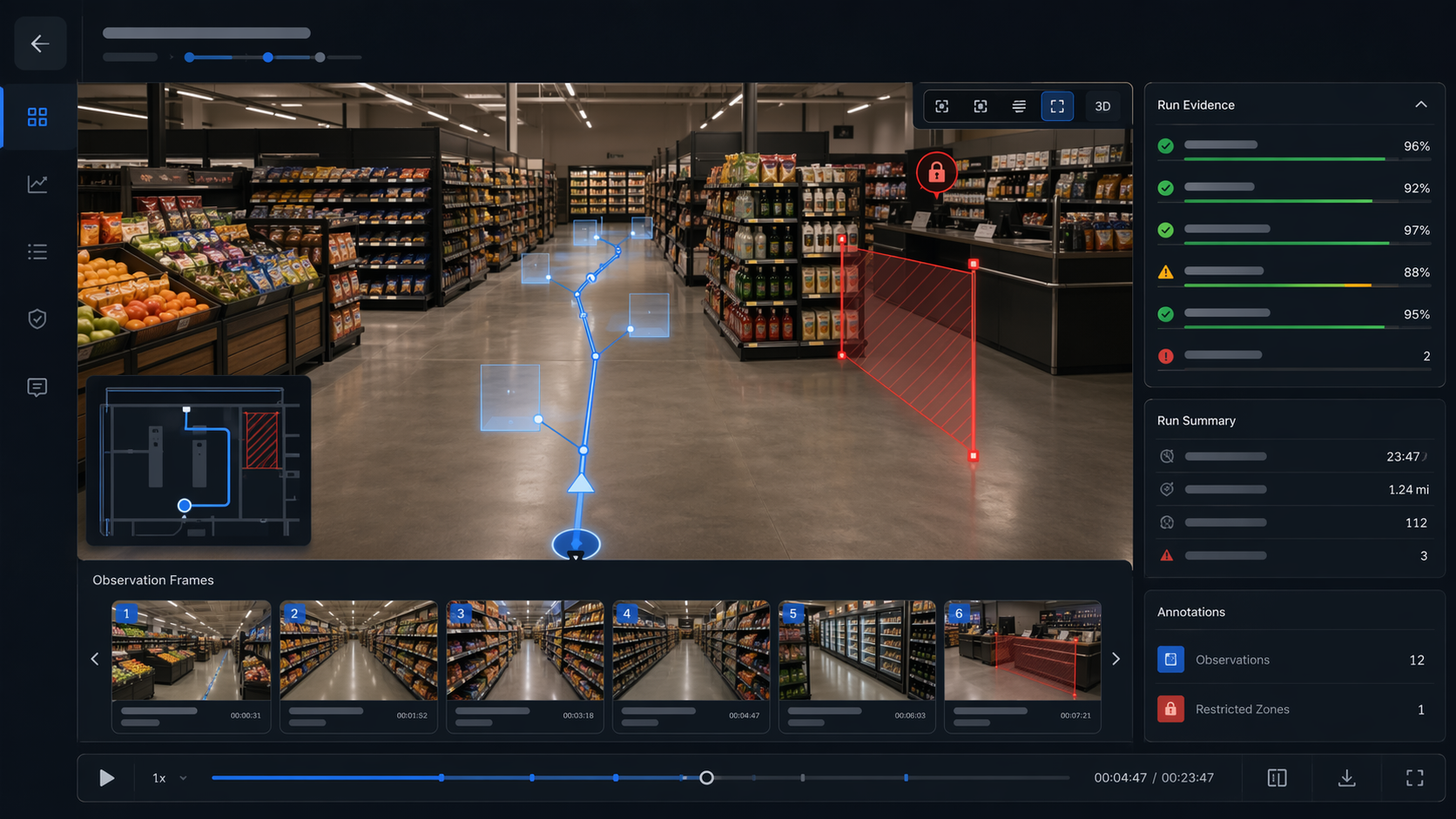

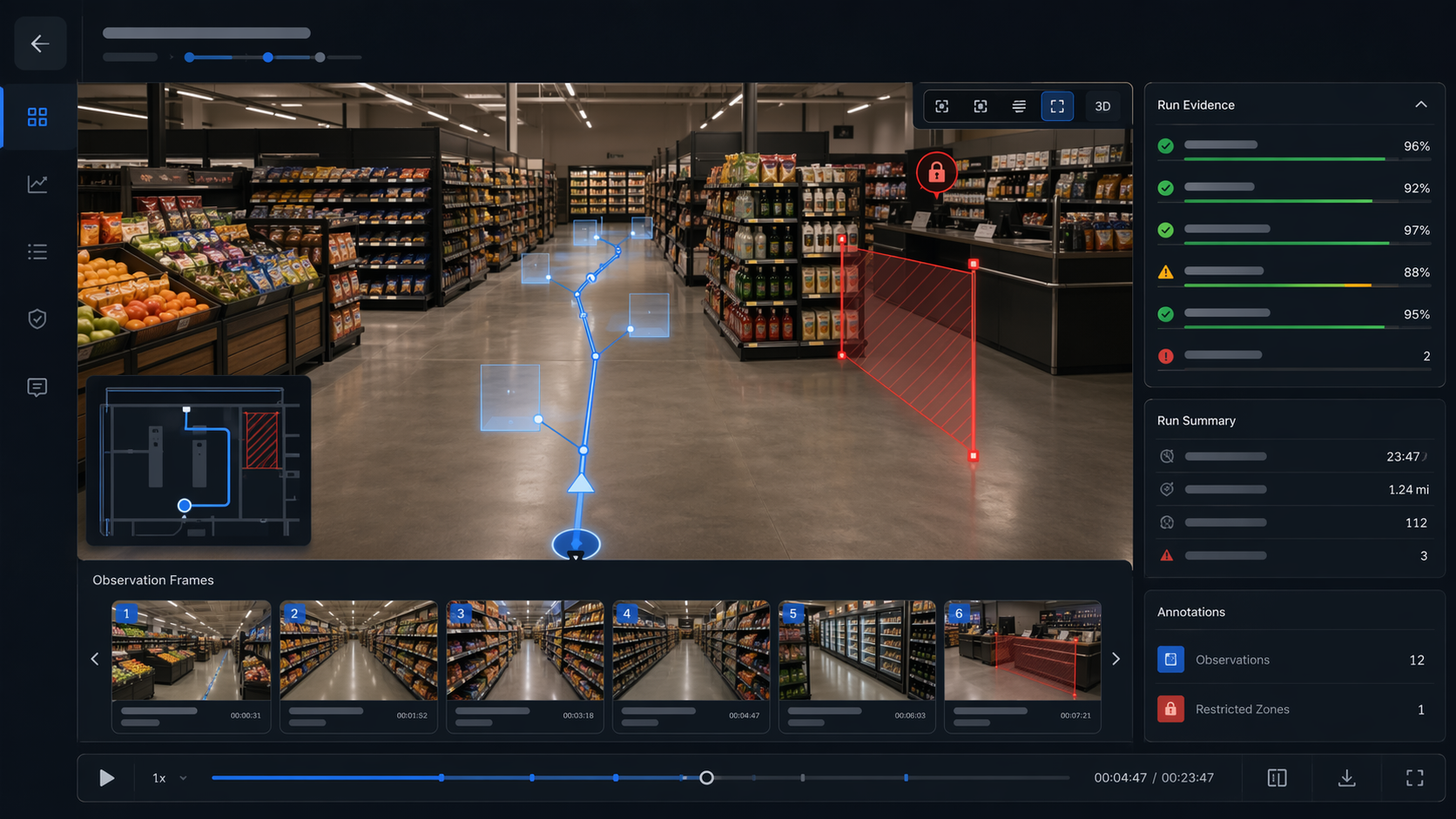

Blueprint opens a hosted evaluation session against the exact-site world model and records task outcomes, route behavior, observations, and limits.

Export

The buyer leaves with a review summary, run evidence, export framing, and a clear recommendation for what to do next.

Run evidence example

Export evidence

What leaves the session.

Commercial shape

Hosted evaluation sits between listing and commitment.

It is the managed eval path for one site-specific world model, not a generic benchmark console or deployment guarantee.

What you receive

Trust and fit

What this path is good for and what it does not claim.

What stays explicit

Hosted evaluation is not a deployment guarantee. Rights, privacy, restrictions, and export boundaries stay visible, and irreversible commitments remain human-gated.

When this is a fit

Use this path when one real site already matters and the team needs run evidence before moving files around or sending people on-site.

Typical first reply

Public-listing and hosted-evaluation questions usually get a first reply within 1 business day. Rights or export review usually gets a first scoped answer within 2 business days.

Next step

Start with one world model and one workflow.

Browse the catalog, open truthful sample proof, or request the world model your team needs if it is not listed yet.